Open table formats like Apache Iceberg and Delta Lake are changing how companies store analytical data – and who controls it. If you store your analytical data in a proprietary format, you’re locked in – to the engine, the tooling, the license costs. The data belongs to the company, not the engine.

Analytical systems are increasingly moving to the cloud; data warehouses, lakehouses and BI platforms run on object storage. To be clear: this is not about OLTP systems. The operational database stays exactly where it is. Open table formats address the analytical layer – where data is aggregated, transformed and turned into decisions.

If you ever want to switch the compute engine in that layer – for cost reasons, changing requirements or simply better alternatives – you can do so without migrating terabytes of data.

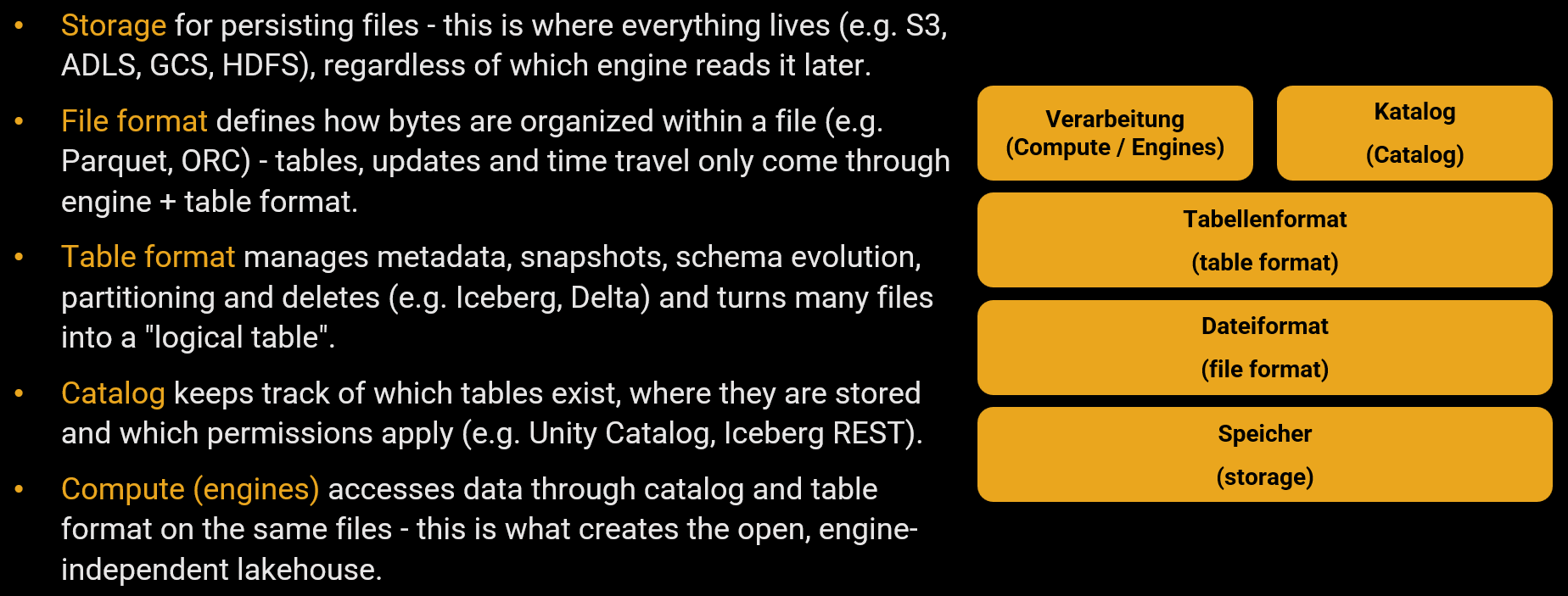

Three building blocks make this possible.

1. Parquet: The File Format Foundation

Apache Parquet stores data in a columnar, compressed format with embedded statistics – comparable to a column store in the database world. The format is open, vendor-neutral and has become the de facto standard for analytical data on object storage (S3, Azure Blob, GCS).

Parquet alone is not enough to form a table. It lacks schema evolution, time travel and transactional guarantees. A directory full of Parquet files is just a pile of files – not a table. This is exactly the gap that table formats close.
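To make "columnar with embedded statistics" a little more concrete, here is a minimal Python sketch. It is a toy model, not the Parquet format itself: real Parquet adds row groups, encodings and compression, and the helper names `to_columns` and `column_stats` are made up for this illustration.

```python
# Toy model of a columnar layout. Real Parquet adds row groups,
# encodings and compression on top of this basic idea.
rows = [
    {"region": "North", "quarter": "Q1", "amount": 125000.00},
    {"region": "South", "quarter": "Q1", "amount": 98000.00},
    {"region": "West", "quarter": "Q2", "amount": 110500.00},
]


def to_columns(records):
    """Pivot row-oriented records into one list of values per column."""
    return {name: [r[name] for r in records] for name in records[0]}


def column_stats(columns):
    """Compute min/max per column, similar in spirit to embedded statistics."""
    return {name: (min(values), max(values)) for name, values in columns.items()}


columns = to_columns(rows)
stats = column_stats(columns)

# An aggregate over one column only touches that column's values.
total_amount = sum(columns["amount"])
print(total_amount)
print(stats["amount"])
```

The point of the layout is that a query such as a sum over `amount` never has to read `region` or `quarter`, and the min/max values can answer some questions without reading the data at all.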

2. Delta Lake and Apache Iceberg: What Turns Parquet Files Into a Table

Both formats use Parquet as the data layer and add a metadata layer that turns files into a fully-fledged table – with schema evolution, time travel and ACID transactions at the table level. On top of that, data skipping through metadata-level statistics works similarly to an index, but automatically and without manual maintenance. Concepts that database professionals have known for decades now exist on the data lake as well.
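The data skipping idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `DataFile` class, the file names and the single `amount` column are hypothetical, and the real metadata structures of Iceberg and Delta Lake are considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFile:
    path: str          # hypothetical file name
    min_amount: float  # smallest "amount" value stored in this file
    max_amount: float  # largest "amount" value stored in this file


def files_to_scan(files, lower_bound):
    """Keep only files whose value range can contain rows with amount >= lower_bound."""
    return [f for f in files if f.max_amount >= lower_bound]


# Per-file statistics as a table format might record them in its metadata layer.
manifest = [
    DataFile("part-0.parquet", 10.0, 900.0),
    DataFile("part-1.parquet", 1000.0, 5000.0),
    DataFile("part-2.parquet", 200.0, 1200.0),
]

# For "WHERE amount >= 1000", part-0 can be skipped without being opened.
selected = files_to_scan(manifest, lower_bound=1000.0)
print([f.path for f in selected])
```

Unlike an index, nobody has to create or maintain these statistics: in this model they are simply recorded whenever a file is written.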

The formats are converging. Roughly speaking, Iceberg has the broader engine ecosystem and Delta Lake the deeper Databricks integration. Both are built on Parquet – the data files are essentially the same, and the main difference lies in the metadata layer. In practice, the choice often depends more on the existing platform and governance stack than on general technical superiority.

3. The Catalog: Where Engines Find Their Tables

The fundamental paradigm shift: the same files can be read and written by different engines. Of course you can also use Iceberg and Delta Lake via Python, Java or Scala. But for database professionals: SQL is all you need.

Take DuckDB as an example – a lightweight, locally installable analytical database that reads and writes Iceberg tables directly via SQL:

```sql
-- Connect to Iceberg catalog
-- (simplified: a real setup typically also passes connection options
-- such as the catalog endpoint and credentials)
ATTACH 'my_warehouse' AS catalog (TYPE iceberg);

-- Create and populate a table
CREATE TABLE catalog.default.revenue (
    region VARCHAR, quarter VARCHAR, amount DECIMAL(10,2)
);

INSERT INTO catalog.default.revenue
VALUES ('North', 'Q1', 125000.00), ('South', 'Q1', 98000.00);
```

DuckDB is just one of many engines. The same Iceberg table, the same star schema – queryable by different engines via SQL. The list keeps growing (as of 04/2026): Databricks and Spark support both Delta Lake and Iceberg natively, as do Trino, Dremio and Flink. Microsoft Fabric uses Delta Lake as its default format and can automatically expose Delta tables as Iceberg. Snowflake supports Iceberg. PostgreSQL can read Iceberg and Delta tables and write Iceberg tables via the open-source extension pg_lake. Oracle has been able to read Iceberg tables since version 23ai; read and write is available from 26ai onward, but only in the Autonomous Database in the cloud. Delta Lake is not supported by Oracle.

Multiple engines can work on the same data simultaneously – each one the best tool for its specific purpose. Spark for batch processing, Trino for interactive queries, DuckDB for local analysis, Power BI for reporting. That said, engine freedom is not the same as engine interchangeability. SQL dialects, optimizer behavior and supported features differ – testing across engines remains essential.

But how does an engine like DuckDB or PostgreSQL know where on the vast object storage the current version of a table lives? This is where the catalog comes in (e.g. Databricks’ Unity Catalog, the OneLake Catalog in Microsoft Fabric or implementations of the Iceberg REST Catalog API). If you know what a system catalog does, you know the principle: it acts as the data dictionary. The catalog holds the pointer to the latest metadata tree of a table. When multiple engines want to modify data concurrently, the catalog handles concurrency control: only whoever atomically updates the pointer completes their commit successfully. This is how the transactional promise is technically enforced in a distributed environment.
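The atomic pointer update described above is essentially a compare-and-swap. The following Python sketch shows the principle with an in-memory stand-in; `ToyCatalog`, its methods and the metadata paths are hypothetical and do not mirror the API of any real catalog.

```python
import threading


class ToyCatalog:
    """In-memory stand-in for a catalog: table name -> current metadata location."""

    def __init__(self):
        self._pointers = {}
        self._lock = threading.Lock()

    def current(self, table):
        with self._lock:
            return self._pointers.get(table)

    def commit(self, table, expected, new):
        """Swap the pointer only if it still equals `expected` (compare-and-swap)."""
        with self._lock:
            if self._pointers.get(table) != expected:
                return False  # another writer committed first; the caller must retry
            self._pointers[table] = new
            return True


catalog = ToyCatalog()
catalog.commit("revenue", None, "metadata/v1.json")

# Two writers start from the same base version, then both try to commit.
base = catalog.current("revenue")
writer_a_ok = catalog.commit("revenue", base, "metadata/v2-a.json")
writer_b_ok = catalog.commit("revenue", base, "metadata/v2-b.json")

print(writer_a_ok, writer_b_ok, catalog.current("revenue"))
```

In this model the losing writer does not corrupt anything: it re-reads the current pointer, rebases its changes on the new version and tries again, which is the usual pattern in optimistic concurrency control.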

The complete stack at a glance:

Start With the Use Case, Not the Format

Open table formats are powerful – but they’re a means to an end, not the end itself. Before choosing any architecture component, the question is: What business problem are we solving?

This sounds obvious. In practice, it’s where most lakehouse initiatives go wrong. Teams pick a format because it’s trending, build a platform because the vendor demo looked impressive, and then go looking for data to put in it. The result: an expensive infrastructure that nobody uses. (For more on why letting go of old habits matters, see The Art Of Data Tech Unlearning.)

A use-case-first approach flips this around:

1. Start with the business question. “Which customer segments are most profitable after claims?” is a use case. “We need a lakehouse” is not.

2. Define the data product. What does the consumer need? A star schema for self-service BI? A curated dataset for a machine learning model? An aggregated feed for regulatory reporting? The use case determines the data model, the refresh frequency and the quality requirements.

3. Make the format an architecture decision, not a per-table decision. Choose one open table format for the platform – and stick with it. Mixing Iceberg and Delta Lake per table or per team is not flexibility, it’s complexity in disguise. The format is a guardrail, not a variable.

A practical example: An insurance company needs regulatory reports on solvency ratios, self-service BI for business users and a data foundation for churn prediction models. Three very different use cases – but the same Delta Lake tables on the same object storage serve all of them:

- Regulatory reporting uses time travel to reproduce any historical snapshot for audits. The use case demands historization, auditability and cost-efficient long-term storage – capabilities that Delta Lake provides out of the box.

- Self-service BI connects Power BI or Tableau directly to the star schema in the same Delta Lake layer. No data export, no separate cube – just a different engine on the same tables.

- Data science queries the identical Delta Lake tables via DuckDB or Spark for feature engineering. The team gets production data without a separate pipeline or copy.

One format, one storage layer, three engines. That’s the actual value proposition of open table formats: not format flexibility, but platform consistency with engine freedom.
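The time travel that the regulatory reporting use case relies on can be pictured as an append-only log of snapshots. The Python sketch below is a toy model under that assumption; `ToyTable` and the file names are invented for illustration, and neither Delta Lake nor Iceberg stores its history in exactly this form.

```python
from dataclasses import dataclass, field


@dataclass
class ToyTable:
    """Append-only snapshot log; each snapshot is the set of data files in the table."""

    snapshots: list = field(default_factory=list)

    def commit(self, added=(), removed=()):
        """Record a new snapshot derived from the latest one and return its id."""
        current = self.snapshots[-1] if self.snapshots else frozenset()
        self.snapshots.append((current - frozenset(removed)) | frozenset(added))
        return len(self.snapshots) - 1

    def files(self, snapshot_id=None):
        """Files visible in the given snapshot; defaults to the latest one."""
        if snapshot_id is None:
            snapshot_id = len(self.snapshots) - 1
        return sorted(self.snapshots[snapshot_id])


table = ToyTable()
s0 = table.commit(added=["q1.parquet"])
s1 = table.commit(added=["q2.parquet"])
# A correction replaces the Q1 file; earlier snapshots are left untouched.
s2 = table.commit(added=["q1-corrected.parquet"], removed=["q1.parquet"])

print(table.files())    # current state, as seen by BI and data science
print(table.files(s1))  # state as of snapshot s1, as an auditor would see it
```

Reproducing an old report then means reading an older snapshot rather than restoring a backup. It only works as long as the files referenced by older snapshots are retained on storage, so retention settings become part of the audit requirements.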

Benefits and Boundaries

What open table formats deliver:

Being able to switch engines at any time means no longer being stuck on expensive licenses – for many companies, that alone is reason enough. But it’s not just about license costs – it’s about data sovereignty: keeping your data in open formats on your own storage means staying in control, regardless of what decisions a vendor makes tomorrow. That said, data sovereignty doesn’t end with the file format. It’s a much larger organizational challenge around having a choice where necessary. Open formats are the foundation – not the finish line.

Expensive database licenses for purely analytical workloads become questionable when the same data is queryable from DuckDB or Trino. And because all engines work on the same files, redundant copies and the discrepancies between reports that come with them are eliminated. Evaluating a new BI tool or ML platform? Just point it at the existing Iceberg tables – no data export, no new pipeline.

Parquet, Iceberg and Delta Lake are all under the Apache 2.0 license – no vendor can withdraw the format or make it proprietary. And if you’re worried about support: it comes from the platform vendor, not the open-source project – just like Linux.

Where the boundaries are:

Open formats don’t replace data modeling – a poorly designed star schema doesn’t get better by putting it on Iceberg. ACID guarantees generally apply to a single table: foreign key constraints are not enforced, and cross-table transactions are typically not available. Governance (permissions, lineage, schema management) must be built separately in the catalog – and binding yourself to a catalog vendor risks a new lock-in, even though vendor-neutral standards like the Iceberg REST Catalog API are designed to reduce that risk. For a deeper look at how data strategy, governance, management and security relate to each other, see The Data House.

Conclusion

Open table formats are no longer hype – they’ve arrived in production. This matches my own experience from several years of production use, including in a regulated industry where I’m personally responsible for the platform. These formats bring exactly the concepts to the data lake that database professionals have mastered for decades: transactions, clean schema management and governance. Anyone planning a new cloud-based analytical platform today has the opportunity to store their data in an open format from the start. In an industry dominated by a handful of hyperscalers, reducing one-sided dependencies isn’t an ideological statement – it’s risk management. Not because the current engine is bad – but because the next one might be even better.