Data professional fundamentals have never been more valuable, yet for years the industry measured progress by volume: lines written, data products shipped, pipelines grown, dashboards created. AI has now exposed how little these metrics ever said about actual impact.

The real cost of software was never in the writing. It was always in the thinking behind it. Delays were rarely caused by coding speed but by missing clarity.

The PoC Illusion

AI-assisted coding has made one thing genuinely cheap: prototypes. A working proof-of-concept that would have taken a skilled developer two days now takes two hours. This sounds like pure progress – and in isolation, it is.

I used Claude to generate a layered lakehouse architecture for Formula 1 data. The generated code worked, but it had a critical architectural flaw: the core layer read from the mart layer – a complete inversion of the standard flow of data from core to mart. The pipeline still produced numbers, which is exactly why this kind of flaw looks harmless in a PoC and becomes expensive months later. When I pointed it out, Claude immediately agreed and corrected it.
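An inversion like this can be caught mechanically rather than by eye. What follows is a minimal sketch under stated assumptions, not the check used in the project: the layer names (`staging`, `core`, `mart`), the table names, and the `find_layer_violations` helper are all hypothetical, and it assumes every table name is prefixed with its layer.

```python
# Hypothetical layer order: a table may only read from its own or an earlier layer.
LAYER_ORDER = ["staging", "core", "mart"]


def layer_of(table: str) -> str:
    """Return the layer prefix of a table name such as 'core.lap_times'."""
    return table.split(".", 1)[0]


def find_layer_violations(dependencies: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (table, source) pairs where a table reads from a later layer."""
    rank = {layer: position for position, layer in enumerate(LAYER_ORDER)}
    violations = []
    for table, sources in dependencies.items():
        for source in sources:
            if rank[layer_of(source)] > rank[layer_of(table)]:
                violations.append((table, source))
    return violations


# Illustrative dependency map with invented table names.
dependencies = {
    "core.lap_times": ["staging.raw_laps"],
    "core.driver": ["mart.driver_standings"],  # core reads from mart: inverted
    "mart.driver_standings": ["core.lap_times"],
}

violations = find_layer_violations(dependencies)
print(violations)
```

Run as part of a build, a check of this kind turns an architectural rule into something a coding agent can be held to, instead of something a reviewer has to notice.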

The problem is that PoCs were never the challenging part. Production is.

Production means accountability, security, reliability, monitoring, compliance, data quality, operability, and the ability to change the system six months from now without it falling apart. None of those properties emerge automatically from generated code. They require decisions – and decisions require judgment.

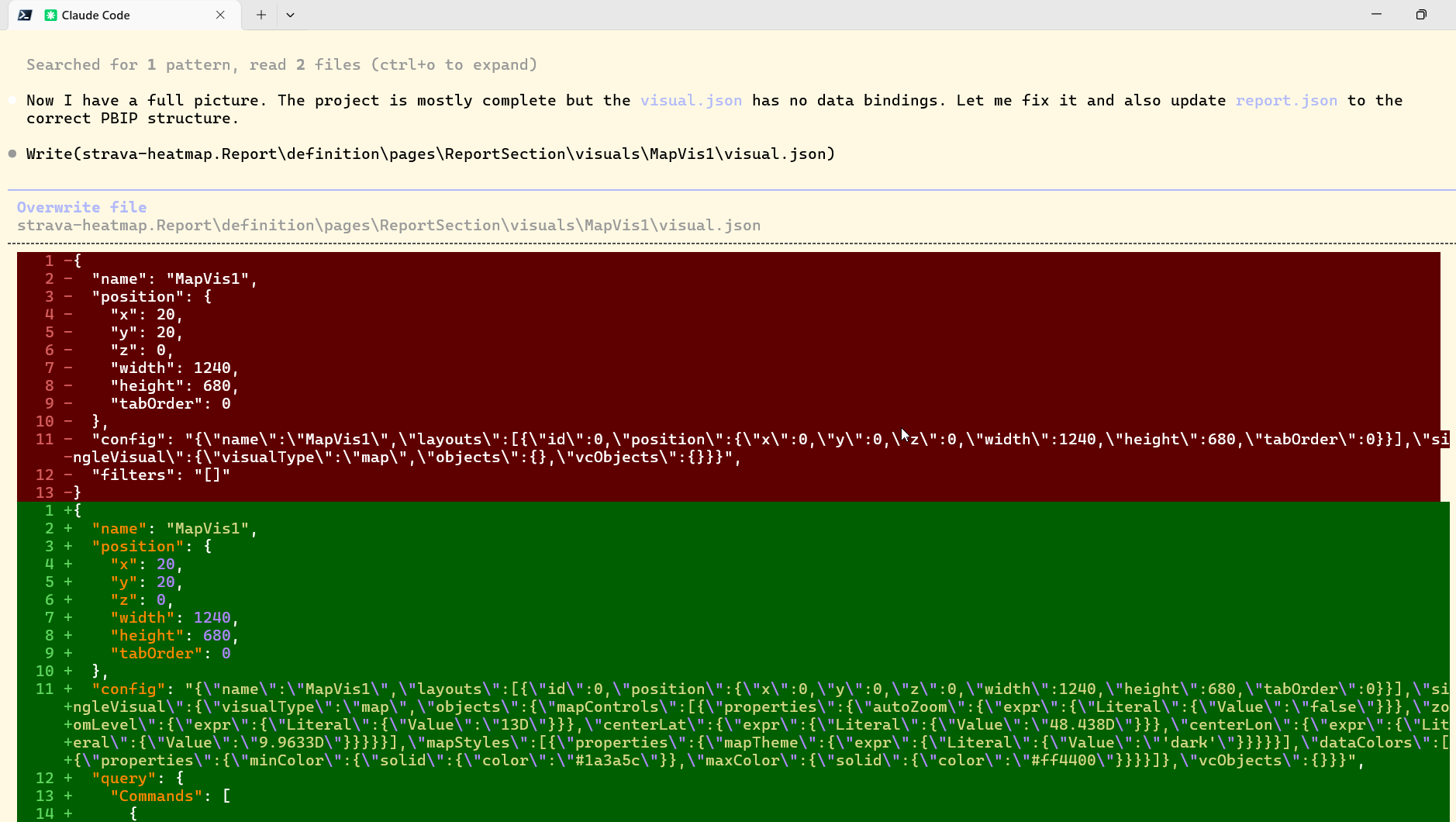

A recent experiment made this even clearer. Power BI has shifted from a binary file format to a text-based, code-first format that a coding agent can generate directly. In the Formula 1 lakehouse mentioned above, I had Claude build a complete Power BI solution this way – defining the metadata for data connections, transformations, and visual layouts directly in the .pbip structure. Because the code-first format (.pbip) is relatively new and training data is limited, Claude initially produced files with structural errors that required guidance to resolve, as shown in the screenshot. But here is the point worth sitting with: with that guidance, it worked. The agent learned the constraints, corrected its output, and delivered a functioning solution.

AI coding is not “almost solved”. For many practical use cases it is effectively solved – with the caveat that the quality of the output depends entirely on the quality of the constraints you provide. Human programmers make mistakes too, and they also need time to learn and try new features. The difference is that an AI agent with a well-specified project brief and clear acceptance criteria can often converge on a correct solution faster than most developers would. The remaining flaws are real but shrinking. What is not shrinking is the need for someone who understands the system well enough to provide that brief in the first place.

When AI flattens the cost of the PoC, it does not flatten the cost of everything that comes after. What it does is make the gap between “working demo” and “production system” more visible, and more politically inconvenient. Stakeholders see a prototype in two hours and reasonably ask why deployment takes two months. The honest answer – that the demo proves nothing about production readiness – has always been true. Now it is harder to avoid.

The Real Question Is Not “Who Types the Code?”

The shift that matters is not from human-typed code to AI-generated code. It is about who holds responsibility for what comes after the code exists.

In my daily context of financial services and insurance regulation, production readiness means traceable requirements, controlled change processes, auditable data lineage, defined ownership for incidents, cost accountability, and evidence that the reported metrics match business definitions. AI can speed up implementation, but it cannot take responsibility when these verification layers are missing. These are not AI-solvable problems today, and they may never be – not because AI will not improve, but because responsibility is not a technical artifact. These are accountability questions, and they are political before they are technical.

The scarcity is shifting. Code production is becoming a commodity. Even with perfect code generators, someone still needs to understand the system deeply enough to evaluate whether the output is correct, safe, and appropriate for the context.

Two Paths Forward – And One That Gets Overlooked

How roles adapt to this shift is not uniform. Two paths are widely discussed.

Engineers who thrive will move toward what you might call product engineering: leading with problem understanding, sharpening requirements, defining measurable outcomes, and using AI-assisted coding as an accelerator – not a replacement for judgment. Their value is no longer in how they write code but in what problems they choose to solve and why.

Business domain experts gain the ability to build directly, which is genuinely new. AI lowers the technical barrier enough that a finance analyst or operations specialist can assemble functional tools without a developer in the loop. The risk is the obvious one: being able to build does not mean knowing what to build, or what “good” looks like in production. Domain knowledge and requirements skill become the constraint, not technical skill.

What gets less attention is the third path – platform and enablement engineering. Somebody has to build the guardrails, the CI/CD pipelines, the security policies, the observability infrastructure, the model and prompt governance layer. As AI increases the pace at which code enters production, the need for robust guardrails around that code increases proportionally. Platform engineers are the ones who make it safe for everyone else to move fast.

Fundamentals Are Not Basics

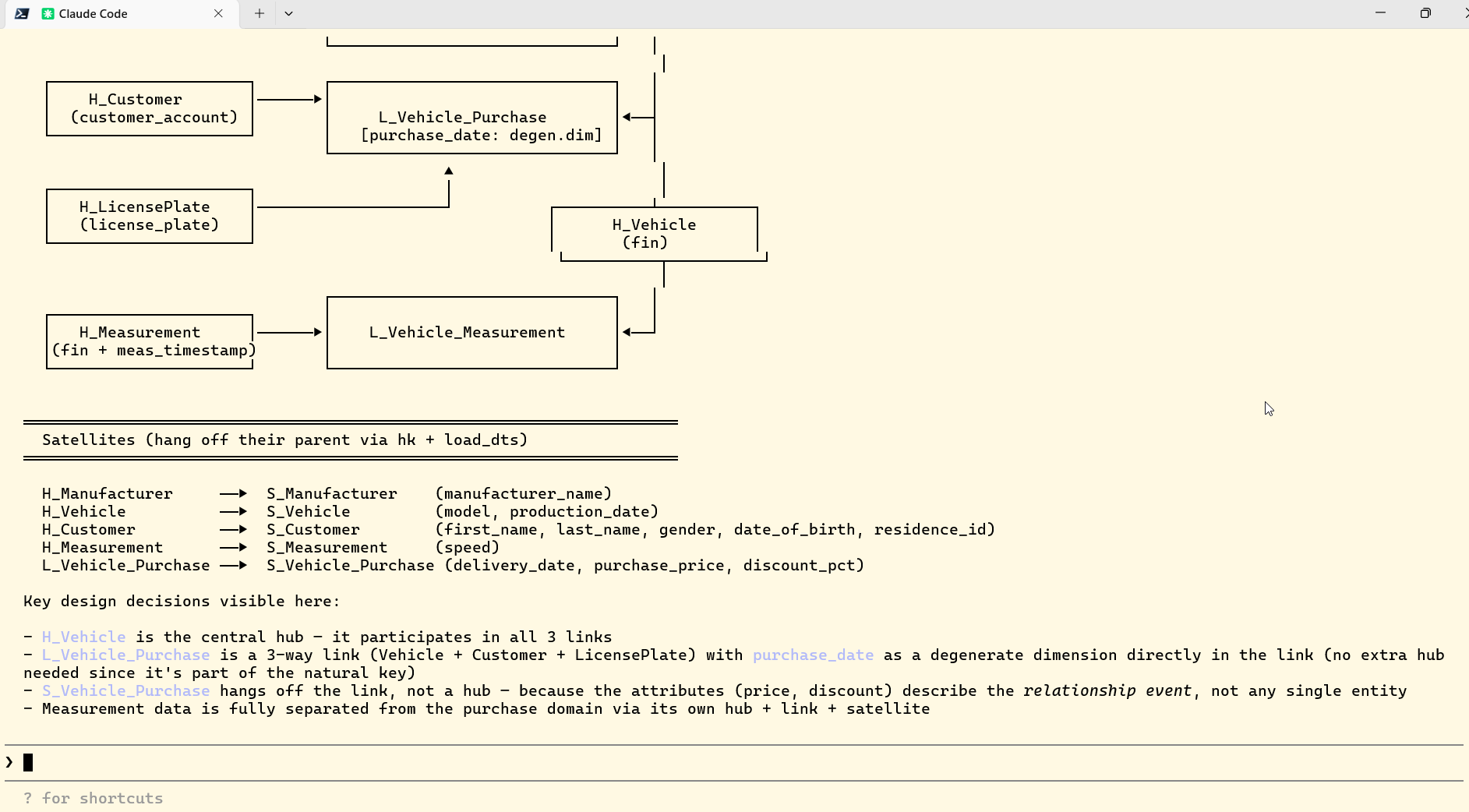

While teaching data management at DHBW, I assigned a modeling task and solved it with Claude side by side – here is what Claude produced:

When we reviewed the outputs together, the Star Schema was workable, while the Data Vault attempt contained structural mistakes – such as using a volatile license plate as a business key, or making wrong relationship assumptions and justifying them with a degenerate dimension. The students learned quickly that recognizing correctness is not optional, because production demands vetted rules, stable identifiers, and governance around meaning before any model reaches consuming teams.
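Why a volatile business key is a structural mistake can be shown in a few lines. This is a sketch under assumptions, not the classroom solution: it assumes the common Data Vault convention of deriving a hub key by hashing the normalized business key, and it introduces a vehicle identification number (`vin`) as a stable alternative, which the original task may not have used. All values are invented.

```python
import hashlib


def hub_hash_key(business_key: str) -> str:
    """Derive a hub key by hashing the normalized business key (assumed convention)."""
    normalized = business_key.strip().upper()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# The same physical vehicle before and after re-registration (invented values).
vehicle_before = {"vin": "WVWZZZ1JZXW000001", "license_plate": "S-AB 1234"}
vehicle_after = {"vin": "WVWZZZ1JZXW000001", "license_plate": "M-XY 9876"}

# With the plate as business key, the vehicle silently becomes a "new" hub entry.
plate_key_stable = (
    hub_hash_key(vehicle_before["license_plate"])
    == hub_hash_key(vehicle_after["license_plate"])
)

# With a stable identifier, history stays attached to one hub entry.
vin_key_stable = hub_hash_key(vehicle_before["vin"]) == hub_hash_key(vehicle_after["vin"])

print(plate_key_stable)  # False
print(vin_key_stable)    # True
```

The code runs without error in both cases, which is the point: nothing crashes when the key is wrong, so someone has to recognize that the model is.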

There is a persistent confusion between “fundamentals” and “basics.” Basics are things you learn once and then stop thinking about: language syntax, basic SQL, git commands. Data professional fundamentals are the things that determine decision quality no matter how the tools change – not the tools themselves.

For data and software engineering, the fundamentals that matter in 2026 are the same ones that mattered in 2010 and will matter in the future:

- Specify – Can you translate a business problem into a testable specification with explicit acceptance criteria?

- Prioritize – Can you set priorities effectively to create business value and make quick progress?

- Analyze – Can you spot the dangerous assumptions in AI-generated artifacts and reason about trade-offs before they become incidents?

- Communicate – Can you defend a choice, explain a trade-off, and align stakeholders around what matters – and what does not?

- Validate – Can you prove that the data reflects reality rather than just confirming the code did not crash – and define what “correct” looks like precisely enough that a machine or a colleague can verify it independently?
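The "Specify" and "Validate" points can be made concrete by writing acceptance criteria as checks that a machine or a colleague can run independently. The following is a minimal sketch, assuming a small in-memory result set: the `Criterion` class, the column names (`policy_id`, `premium`), and the control total are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from typing import Callable

Rows = list[dict]


@dataclass
class Criterion:
    """One acceptance criterion: a readable name plus a check returning True or False."""
    name: str
    check: Callable[[Rows], bool]


def evaluate(rows: Rows, criteria: list[Criterion]) -> dict[str, bool]:
    """Run every criterion against the rows and report pass/fail per criterion."""
    return {criterion.name: criterion.check(rows) for criterion in criteria}


# Hypothetical control total taken from the source system, not from the pipeline itself.
SOURCE_PREMIUM_TOTAL = 300.0

criteria = [
    Criterion("policy_id is never missing",
              lambda rows: all(row["policy_id"] for row in rows)),
    Criterion("policy_id is unique",
              lambda rows: len({row["policy_id"] for row in rows}) == len(rows)),
    Criterion("premium total reconciles with source",
              lambda rows: abs(sum(row["premium"] for row in rows) - SOURCE_PREMIUM_TOTAL) < 0.01),
]

# Invented pipeline output.
rows = [
    {"policy_id": "P-1", "premium": 100.0},
    {"policy_id": "P-2", "premium": 200.0},
]

results = evaluate(rows, criteria)
for name, passed in results.items():
    print(f"{'PASS' if passed else 'FAIL'}: {name}")
```

The reconciliation check is the one that separates "the code did not crash" from "the data reflects reality": it compares the output against a figure that was obtained independently of the pipeline.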

Prompting is an interface. The underlying skill is clarity – the ability to describe what you want precisely enough that something else (human, AI, or system) can produce it. That skill does not expire.

The Identity Shift No One Talks About Openly

Many engineers – and I include myself in this – have built a significant part of their professional identity around craft. The satisfaction of a well-structured query, a clean data model, an elegant pipeline. There is genuine meaning in that. When AI can produce competent code on demand, that source of identity is under pressure. This is worth naming directly, because the response to that pressure shapes how people adapt.

One response is to dig deeper into craft for its own sake, treating AI-generated code as inferior by definition. This is a losing strategy, and most engineers know it.

The more productive response is to relocate the source of pride from “I wrote clean code” to “I solved a problem that improved something for real users.” The craft is still there – it just moves upstream, into problem definition, requirements clarity, and system design, rather than downstream into implementation.

Product ownership is becoming a core engineering competency – not just a role that sits next to engineering. Engineers who internalize that shift early have a genuine advantage.

The Shift That Matters

The role of data professionals is not disappearing – it is being redefined around what AI cannot absorb: making and defending decisions, securing accountability, maintaining reliable operations, and separating mission-driven builds from distraction-driven noise. The mechanical parts of implementation are becoming a commodity. The judgment behind them is not. That is what data.KISS has always been about: clarity about where complexity is genuinely necessary – and the discipline to remove it where it is not.

The professionals who pull ahead are not the ones producing the most output, but the ones who can say what should not be built – and justify why.

If you want to start somewhere concrete: before your next AI-assisted task, write the acceptance criteria first. If you cannot, the problem is not ready for implementation – AI or otherwise. The AI assistant must also be integrated into the daily process. There is no longer a place for manually copy-pasting generated SQL, DAX formulas, dbt templates, or PySpark cells into your source code. Stop copy-pasting prompts and start treating the assistant like a collaborator with a project brief – using structured context files (like CLAUDE.md or INSTRUCTIONS.md) that capture your architecture decisions, constraints, and quality standards. The agent does not know your production context. You do. That gap is exactly where fundamentals matter.